
I. Introduction: A Second Preventable Loss

Seventeen years after Challenger, the Space Shuttle Columbia disaster revealed a painful truth:

The same decision patterns can survive even the lessons of tragedy.

On February 1, 2003, Columbia disintegrated during reentry, killing all seven astronauts. The physical cause was different from Challenger—but the deeper failure was strikingly similar.

Again, the issue was not complete ignorance.

Again, there were warnings.

Again, opportunities to act were missed.

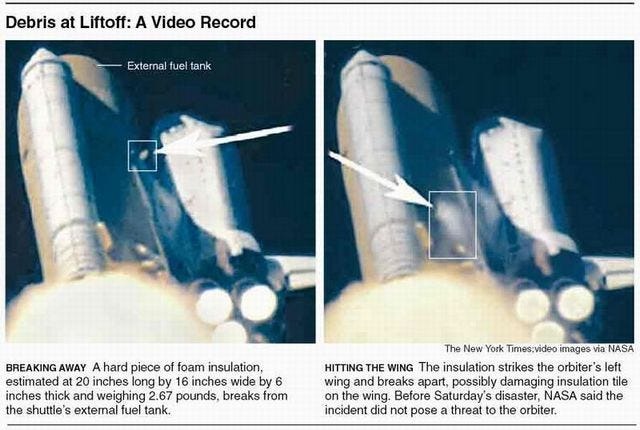

II. The Technical Event: Foam Strike

During launch, a piece of foam insulation broke off from the external tank and struck the shuttle’s left wing.

This was not entirely new:

- Foam shedding had occurred on previous missions

- It had been treated as a maintenance and debris issue, not a catastrophic threat

However, in this case:

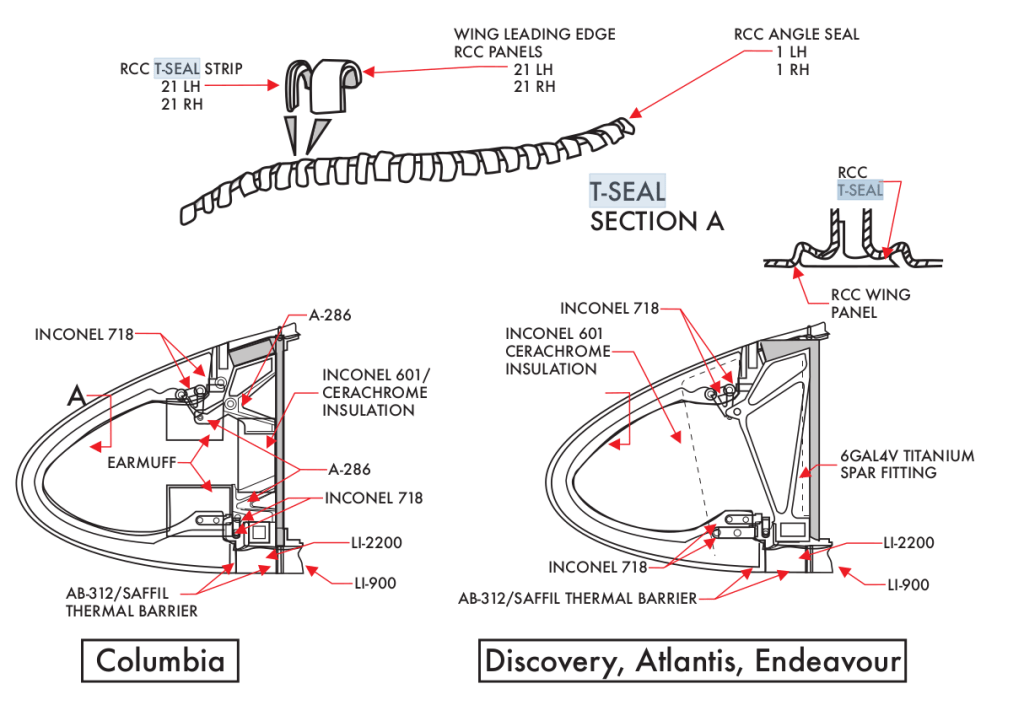

- The foam struck the reinforced carbon-carbon (RCC) leading edge

- It created a breach in the wing’s thermal protection system

At the time, the extent of the damage was unknown, but serious damage was already a conceivable possibility.

III. The Warning Signals

After the strike was observed in launch video:

- Engineers expressed concern about potential damage

- Some requested high-resolution imaging (from military or satellite assets)

- There were discussions about whether the damage could compromise reentry

The key uncertainty was:

Could foam—relatively light material—cause critical damage?

This question was not definitively answered before reentry.
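One way to see why the question deserved a real answer is that impact energy depends on the square of speed, so a light object moving fast can carry more energy than a heavy object moving slowly. The sketch below uses purely hypothetical masses and speeds, chosen only to show the scaling. They are not measurements from the Columbia strike, and the function name is illustrative.

```python
def kinetic_energy_joules(mass_kg: float, speed_m_per_s: float) -> float:
    """Kinetic energy from the standard formula E = 1/2 * m * v^2."""
    return 0.5 * mass_kg * speed_m_per_s ** 2


# Hypothetical inputs for illustration only (not Columbia data).
light_fast = kinetic_energy_joules(mass_kg=1.0, speed_m_per_s=200.0)
heavy_slow = kinetic_energy_joules(mass_kg=10.0, speed_m_per_s=20.0)

print(f"light and fast: {light_fast:.0f} J")
print(f"heavy and slow: {heavy_slow:.0f} J")
print(f"ratio: {light_fast / heavy_slow:.0f}x")
```

In this made-up comparison the lighter object carries ten times the energy of the heavier one, which is why "relatively light" says little on its own about the damage an object can do.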

IV. The Decision Environment

NASA management ultimately decided:

- Additional imaging was unnecessary

- The risk was low based on previous experience

- No contingency action was required

Several assumptions shaped this decision:

- Foam had struck the shuttle before without catastrophic outcome

- The shuttle was already in orbit—options were perceived as limited

- Concern was reframed as non-critical

This created a familiar pattern:

Uncertainty was resolved in favor of continuation, not caution.

V. The Normalization of Risk

Just as with Challenger, Columbia reveals the danger of normalization of deviance:

- A known anomaly (foam shedding) becomes accepted

- Repeated non-catastrophic outcomes reinforce a sense of safety

- The anomaly is reclassified from “warning” to “routine”

Over time, the question shifts from:

“Why is this happening?”

to:

“Why hasn’t it caused failure yet?”

This is not a scientific conclusion.

It is a psychological adaptation.
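A short calculation shows how little a clean track record actually proves. The sketch below is a simplified illustration rather than NASA's own risk model. It assumes each flight is an independent trial with the same failure probability, and asks how high that probability could still be after a run of flights with no failure. The function name and the flight counts are hypothetical.

```python
def failure_rate_upper_bound(uneventful_flights: int, confidence: float = 0.95) -> float:
    """Largest per-flight failure probability still consistent, at the given
    confidence level, with zero failures in `uneventful_flights` flights.

    Assumes independent flights with a constant failure probability.
    """
    if uneventful_flights <= 0:
        return 1.0  # no evidence at all, so nothing can be ruled out
    # Solve (1 - p) ** n = 1 - confidence for p.
    return 1.0 - (1.0 - confidence) ** (1.0 / uneventful_flights)


# Hypothetical flight counts, for illustration only.
for n in (10, 50, 100):
    bound = failure_rate_upper_bound(n)
    print(f"{n} uneventful flights: failure rate could still be up to {bound:.1%}")
```

Under these assumptions, even 100 flights without a failure leave room for a per-flight failure rate of about 3 percent. A history of survival narrows the risk. It does not remove it.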

VI. Missed Opportunities

Several opportunities existed where the outcome might have changed:

1. Imaging the Damage

High-resolution imaging could have assessed the severity of the strike.

2. On-Orbit Inspection

A direct inspection of the wing while in orbit might have clarified the risk.

3. Contingency Planning

Options—though difficult—were not fully explored:

- repair attempts

- rescue mission scenarios

- modified reentry strategies

Instead, the situation was treated as manageable without deeper investigation.

VII. The Moment of Failure

During reentry:

- Superheated air entered the damaged wing

- Internal structural components began to fail

- Temperature sensors showed abnormal readings

- The vehicle lost integrity

Columbia broke apart over Texas.

The imagined risk—damage leading to catastrophic failure during reentry—became reality.

VIII. The Columbia Accident Investigation Board Findings

The investigation concluded:

- The foam strike caused the physical damage

- Organizational culture contributed significantly to the loss

Key findings included:

- Communication breakdown between engineers and management

- Dismissal of concerns due to prior experience

- Overconfidence in the system’s resilience

The report emphasized that NASA’s culture had not fully internalized the lessons of Challenger.

IX. The Same Pattern, Repeated

When placed beside Challenger, the structure is unmistakable:

| Stage | Challenger | Columbia |
| --- | --- | --- |
| Known issue | O-ring sensitivity to cold | Foam shedding |
| Warning | Engineers advise delay | Engineers request imaging |
| Interpretation | Risk seen as unproven | Risk seen as unlikely |
| Decision | Launch proceeds | No further action |
| Outcome | Breakup 73 seconds after launch | Breakup during reentry |

Different technologies.

Same decision pattern.

X. The Deeper Lesson

Columbia reinforces a central principle:

Risk does not disappear because it is familiar.

In fact, familiarity can make risk more dangerous—because it becomes invisible.

The organization did not lack intelligence or expertise.

It lacked a system that could elevate concern into decisive action.

XI. Conclusion: The Persistence of Human Pattern

The Columbia disaster shows that lessons, even when learned, are not always retained in practice.

The same pressures return:

- schedule

- confidence

- normalization

- incomplete communication

And with them, the same vulnerabilities.

The challenge is not only to learn from failure—but to continuously recognize when the same pattern is re-emerging.

Final Reflection

The Columbia disaster teaches that risk acknowledged but not pursued is risk accepted—and accepted risk, under the wrong conditions, becomes reality.
