I. Introduction: A Preventable Catastrophe

The Space Shuttle Challenger disaster stands as one of the most studied technological failures in modern history—not because it was mysterious, but because it was understood in advance.

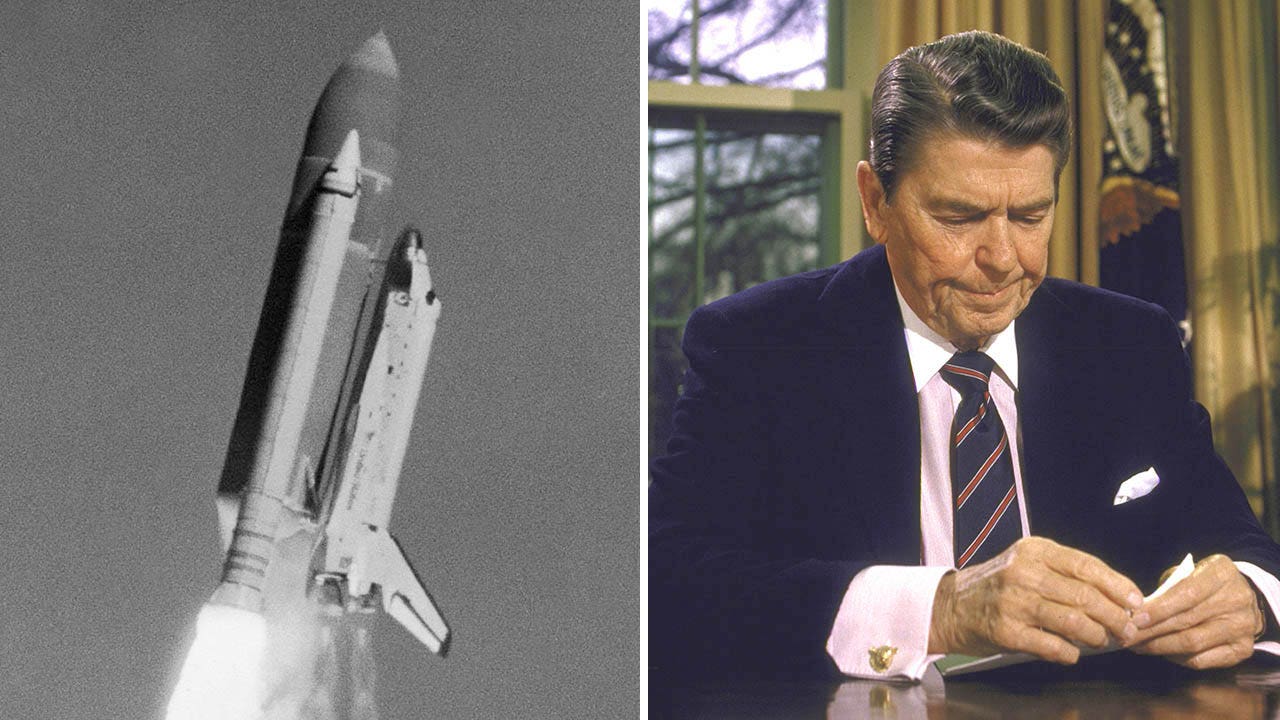

On January 28, 1986, the space shuttle Challenger broke apart 73 seconds after launch, killing all seven crew members. Investigations later showed that the physical cause—the failure of an O-ring seal in the right solid rocket booster—was neither unknown nor unpredictable.

What makes this disaster enduring is not the failure of technology alone, but the failure of decision-making under known risk. The tragedy was not born of ignorance, but of missed opportunities—moments where knowledge existed but did not translate into action.

II. The Technical Problem Was Known

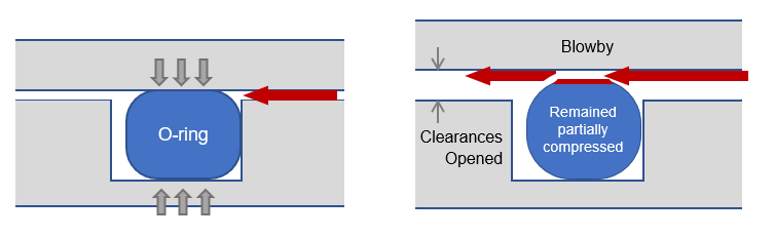

The shuttle’s solid rocket boosters relied on rubber O-rings to seal joints between segments. These O-rings were designed to prevent hot gases from escaping during ignition and ascent.

Engineers, particularly at Morton Thiokol, the contractor that built the boosters, had observed troubling signs on earlier flights:

- O-ring erosion and blow-by had occurred previously

- Performance appeared sensitive to temperature

- Cold conditions reduced the elasticity of the sealing material

Challenger was set to launch on an unusually cold morning in Florida. The air temperature at launch was about 36°F, roughly 15 degrees colder than for any previous shuttle launch.

Engineers warned that under such conditions, the O-rings might not seal properly in time to prevent gas leakage.

III. The Warning and the Decision

On the night before launch, Morton Thiokol engineers joined a teleconference with NASA officials and recommended delaying the launch because of the low temperatures.

Their concern was clear:

Cold weather increased the probability of O-ring failure.

However, their argument faced resistance. NASA managers questioned the strength of the data and asked for definitive proof that launching would be unsafe.

This created a critical imbalance:

- Engineers were expressing risk and uncertainty

- Management was demanding certainty and proof

In the absence of absolute proof, Thiokol management withdrew the recommendation to delay, and the decision was made to proceed with the launch.

IV. The Role of Organizational Pressure

The decision cannot be understood without considering the broader environment:

- The shuttle program was under pressure to maintain a regular launch schedule

- Previous anomalies had not resulted in catastrophe, creating a sense of acceptable risk

- There was institutional momentum toward launch

Over time, repeated minor issues had been reinterpreted as normal rather than as warnings. This phenomenon, which sociologist Diane Vaughan termed the “normalization of deviance,” allowed increasing risk to be treated as routine.

In such an environment, caution can appear as overreaction, and delay as failure.

V. Evidence Misinterpreted

One of the most striking aspects of the disaster is that evidence of risk already existed.

Previous flights had shown O-ring erosion, especially in cooler conditions. Yet this evidence was interpreted in a way that minimized concern:

- Since catastrophic failure had not yet occurred, the system was assumed to be safe

- Partial failures were treated as acceptable margins rather than warning signs

This reflects a common statistical error, treating a run of successes as proof that the system is safe:

The absence of failure is not proof of safety.

Instead of asking, “Why are we seeing damage at all?” the system reassured itself: “Nothing has failed yet.”
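To see why a clean record proves so little, a minimal Python sketch follows. It assumes every flight fails independently with the same probability, which is a simplification. The numbers (a 1-in-50 per-flight risk and 24 prior flights) are illustrative, not taken from any investigation, and the function names are hypothetical.

```python
def prob_no_failures(p_fail: float, n_flights: int) -> float:
    """Chance of n_flights consecutive successes, assuming each flight
    fails independently with probability p_fail (a simplification)."""
    return (1.0 - p_fail) ** n_flights


def upper_bound_on_risk(n_flights: int, confidence: float = 0.95) -> float:
    """Largest per-flight failure probability that is still consistent,
    at the given confidence, with zero failures in n_flights."""
    return 1.0 - (1.0 - confidence) ** (1.0 / n_flights)


if __name__ == "__main__":
    # Illustrative numbers only: a 2% per-flight risk and 24 prior flights.
    print(prob_no_failures(0.02, 24))
    print(upper_bound_on_risk(24))
```

Under these assumptions, a 2% per-flight risk would still produce a spotless 24-flight record about 62% of the time, and such a record cannot rule out a per-flight risk of up to roughly 12%. A string of successes is what a dangerous system often looks like before it fails.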

VI. The Moment of Failure

At ignition, the cold had stiffened the O-rings, and the joint in the right booster did not seal quickly enough.

During the ascent that followed:

- Hot gases escaped through the joint

- A plume of flame impinged on the external fuel tank

- Structural failure followed

At 73 seconds, the shuttle disintegrated.

The sequence of events closely matched the failure scenario engineers had feared.

VII. The Rogers Commission Findings

The Rogers Commission concluded that the disaster resulted from both:

- Technical failure (O-ring design vulnerability)

- Organizational failure (decision-making processes)

The Commission emphasized that communication between engineers and management had broken down. Concerns were not effectively conveyed, and management did not fully grasp the severity of the risk.

The problem was not simply that warnings existed, but that they did not carry sufficient weight in the final decision.

VIII. Lessons Learned

The Challenger disaster offers several enduring lessons:

1. Risk Must Be Evaluated by Consequence, Not Just Probability

Even a low-probability event becomes unacceptable when the consequence is catastrophic.

2. Past Success Does Not Guarantee Future Safety

Repeated success can obscure growing risk rather than confirm reliability.

3. Technical Expertise Must Be Heard Clearly

Engineers closest to the problem often have the most accurate understanding of risk.

4. Communication Is Critical

Warnings must be expressed in ways that decision-makers can fully understand and act upon.

5. Organizational Culture Shapes Outcomes

Pressure, hierarchy, and expectations can override sound technical judgment.
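The first lesson above can be illustrated with a small expected-loss calculation. This is only a sketch under stated assumptions: the `Hazard` type, the probabilities, and the cost units are all hypothetical, and a real risk assessment involves far more than multiplying two numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hazard:
    name: str
    probability: float   # chance of occurring per launch (hypothetical)
    consequence: float   # loss if it occurs, in arbitrary cost units

    def expected_loss(self) -> float:
        # Probability weighted by how bad the outcome would be.
        return self.probability * self.consequence


# Hypothetical hazards, chosen only to show the contrast.
hazards = [
    Hazard("frequent minor fault", probability=0.20, consequence=1.0),
    Hazard("rare catastrophic fault", probability=0.01, consequence=1000.0),
]

# Ranking by probability alone puts the minor fault first;
# ranking by expected loss puts the catastrophic one first.
by_probability = max(hazards, key=lambda h: h.probability)
by_expected_loss = max(hazards, key=Hazard.expected_loss)
```

Even expected loss can understate the problem when the consequence is irreversible, which is why the lesson stresses consequence in its own right and not only as a multiplier.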

IX. Conclusion: A Failure of Translation

The Challenger disaster is often described as a failure of engineering or a failure of imagination. In truth, it was a failure of translation:

- Knowledge existed but did not become conviction

- Concern was expressed but did not become decision

- Risk was identified but not acted upon

The tragedy lies in the gap between what was known and what was done.

In that gap, opportunity was lost—and lives were lost with it.

Final Reflection

The Challenger disaster reminds us that understanding risk is not enough.

Risk must be recognized, communicated, and acted upon before reality forces the issue.
